21 - AI Assisted Coding

Sometimes known as "Vibe coding".

LLMs are here to stay. And you as a developer are expected to know how to use them effectively - how to use them to write code, how to use them to debug code, how to use them to learn new concepts, etc.

Some discussions about future of software engineering

- Siim Vene, in estonian

- https://x.com/niconley/status/1966505076849074618?s=46&t=PS1Kx0D2JS9LvarPHKugng

Siim Vene is from SMIT (estonian internal affairs it house. architect. police, border etc)

Tools to use...

Agentic:

- Amazon Q Developer

- Antigravity

- Auggie (Augment CLI)

- Claude Code

- Cline

- Codex

- CodeBuddy Code (CLI)

- Continue

- CoStrict

- Crush

- Cursor

- Factory Droid

- Gemini CLI

- GitHub Copilot

- iFlow

- Kilo Code

- OpenCode

- Qoder

- Qwen Code

- RooCode

- Trae

Vibe:

- Copilot

- Aider

- v0

- Bolt

- Base 44

- Windsurf

- Lovable.ai

- Replit

- and many, many, many more

And my favorite - Chad Ide (first agentic brainrot ide).

https://www.ycombinator.com/launches/OgV-chad-ide-the-first-brainrot-ide

Many of these are VS Code plugins or forks. Many are commercial. Roo, Cline, Aider, Kilo - open source. Many of these tools are specific to one LLM model. Some try to be model agnostic.

But what is common - they all are just convenient prompt engineering tools and good at picking up commands from llm response. Constructs comprehensive prompts, asks for structured response and parses it for final answer or for automatic next request.

All LLMs are stateless (and frozen in their knowledge) - every request starts from scratch. So the prompt is the only thing that defines the context. And context has size limit. Usually in your LLM conversation - context will grow and grow. All user messages and LLM responses are added to context on top of each other.

Prompt engineering is the key to effective use of LLMs. Usually LLMs start to lose quality at about 75% of context size (plus context poisoning). And since pricing is based on tokens (word parts, ca 4 characters) - big contexts are very expensive. So start a new session often - as soon as your task is completed. When you start to add/ask for irrelevant information on top of completed lengthy task - costs will go up and quality will go down.

When you see "compressing context" - system is trying to reduce context size by removing/summarizing irrelevant information. Details will be lost.

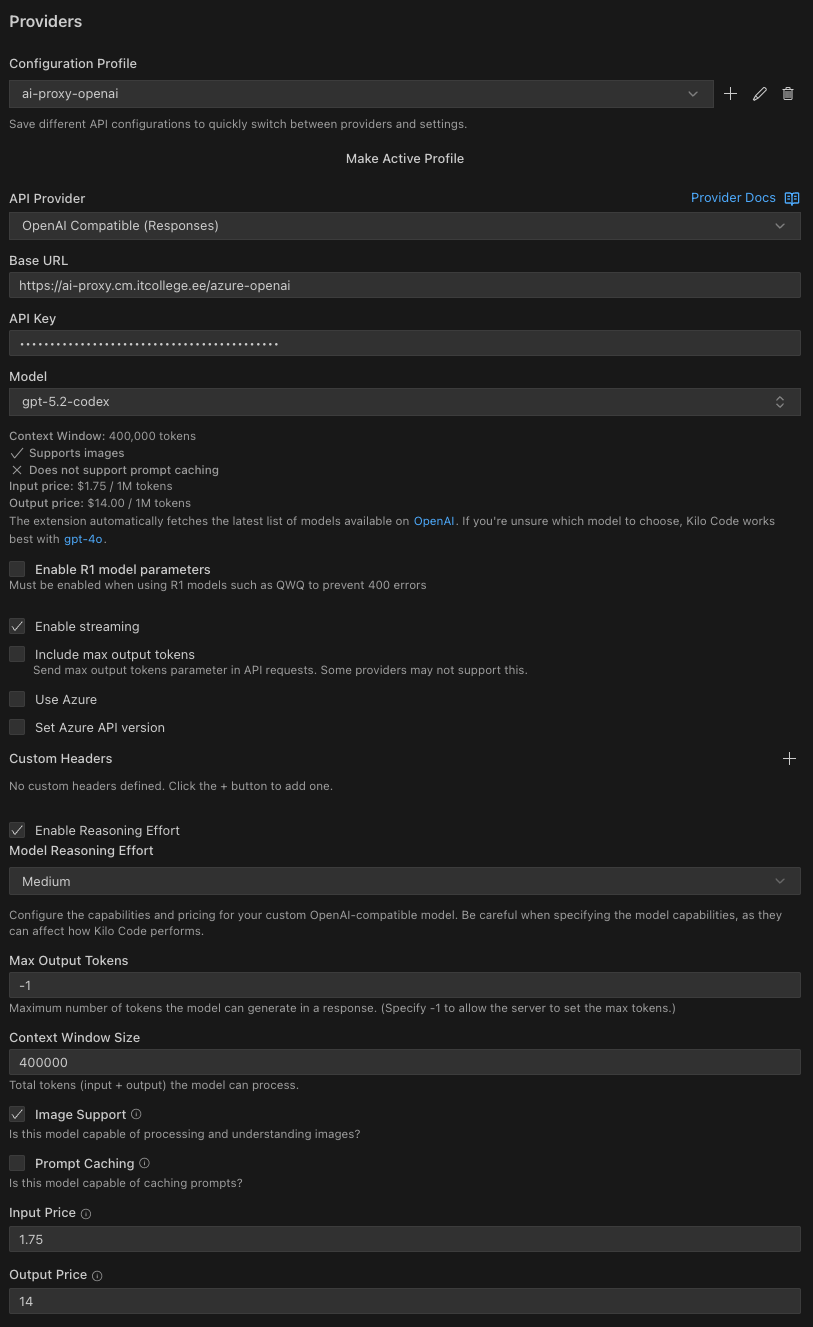

Since in C# dev we are using Rider, there are few options. Currently, we are focusing on Kilo Code - model agnostic, open source, and has decent Jetbrains plugin.

Kilo Code

Kilo code is open-source plugin for VS Code. Model agnostic - you can use almost any LLM provider, including self hosted ones. And Kilo is highly customizable - you can define your own modes/prompts, use MCP tools, index the codebase, etc.

And most important - one of the few that has up-to-date plugin for Jetbrain IDE's (including Rider).

Model to use

Really-really complex decision. Different models are used for different tasks (price, speed, capability).

Currently Anthropic's Opus 4.6 seem to be the best. But expensive.

Then gpt5.3 and gemini 3.

And then many open weight/source models - GLM air, Qwen, Minimax etc.

And many different providers - some models are accessible only directly, some are accessible via proxy provider (openrouter, kilo code). Different pricing models - monthly subscription, pay per use, etc.

Self hosting

If you have enough resources - you can host your own LLM. There are many options for hosting engine - Ollama, LM Studio, etc. Lots of resources required - fast RAM (gpu). Minimal requirements - ca 48+ gb of free GPU/shared memory. (I'm on MacBook pro m1 max - unified 64gb ram. Main local modal - Qwen3-coder-next 4bit MLX).

Api access vs subscription

Some providers offer api access - you can use their models in your own code or tooling. Some offer subscription (usually cheaper) - you can use their models only in their own interface.

Taltech provided LLM access

Visit ai-proxy.cm.taltech.ee

Configure provider in Kilo Code settings as OpenAi Compatible and set model name manually.

Kilo Code Basics

Modes

Kilo code provides several modes (customizable) for different tasks.

- Ask

- Code

- Debug

- Architect

- Orchestrator

- Review

Ask

- A knowledgeable technical assistant focused on answering questions without changing your codebase.

- Limited access: read, browser, mcp only (cannot edit files or run commands)

- Code explanation, concept exploration, and technical learning

- Optimized for informative responses without modifying your project

Code

- A skilled software engineer with expertise in programming languages, design patterns, and best practices

- Full access to all tool groups: read, edit, browser, command, mcp

- Writing code, implementing features, debugging, and general development

- No tool restrictions—full flexibility for all coding tasks

Architect

- An experienced technical leader and planner who helps design systems and create implementation plans

- Access to read, browser, mcp, and restricted edit (markdown files only)

- System design, high-level planning, and architecture discussions

- Follows a structured approach from information gathering to detailed planning

Debug

- An expert problem solver specializing in systematic troubleshooting and diagnostics

- Full access to all tool groups: read, edit, browser, command, mcp

- Tracking down bugs, diagnosing errors, and resolving complex issues

- Uses a methodical approach of analyzing, narrowing possibilities, and fixing issues

Orchestrator

- A strategic workflow orchestrator who coordinates complex tasks by delegating them to appropriate specialized modes

- Limited access to create new tasks and coordinate workflows

- Breaking down complex projects into manageable subtasks assigned to specialized modes

- Uses the new_task tool to delegate work to other modes

- ie. spawns new sessions for each task (with new context)

Review

- An expert code reviewer specializing in analyzing changes to provide structured feedback on quality, security, and best practices

- Access to read, browser, mcp, and when permitted, edit

- Catching issues early, enforcing code standards, accelerating PR turnaround

- Code review before committing, surfacing feedback across performance, security, style, and test coverage

So basically - customized prompt and tool access. You can create your own modes and use specific prompts.

Tools

Kilo code has many tools:

Read Group - Code exploration and analysis

- File system reading and searching

- read_file, search_files, list_files, list_code_definition_names

Edit Group - Code changes and file manipulation

- File system modifications

- apply_diff, delete_file, write_to_file

Browser Group - Web testing and interaction

- Web automation

- browser_action

Command Group - Running scripts, building projects

- System command execution

- execute_command

MCP Group - Specialized functionality through external servers

- External tool integration

- use_mcp_tool, access_mcp_resource

Workflow Group - Context switching and task organization

- Mode and task management

- switch_mode, new_task, ask_followup_question, attempt_completion, update_todo_list

Simple kilo code usage

Start with ask mode, and start to explore the codebase (or ask whatever). Ask natural questions.

For example:

- Open up your solution in Rider, go to Kilo code plugin, select ask mode

What is this solution about?

After several operations this output was produced (2025 demo code, tic-tac-toe):

Based on my analysis of the codebase, this appears to be a Tic-Tac-Toe game implementation with both console and web interfaces, along with configuration management. To provide you with a comprehensive understanding of what this solution is about, I'd like to know: Is there a specific aspect of this solution you'd like me to focus on explaining in more detail, or would you prefer a general overview of the entire system?

Using local modal via lm studio, you can log the model access with:

lms log stream --source model --filter input,output

Model session is here: model_session2.txt

Final result:

## Tic-Tac-Toe Solution Overview

This is a comprehensive Tic-Tac-Toe game implementation with both console and web interfaces, built using C# and .NET technologies. The solution demonstrates modern software architecture principles with a clean separation of concerns across multiple layers.

### Solution Architecture

The solution follows a layered architecture pattern with the following main components:

1. **BLL (Business Logic Layer)** - Contains core game logic and domain models

- `GameBrain.cs`: Main game engine that handles board state, move processing, and win detection

- `GameConfiguration.cs`: Configuration class for game parameters (board size, win condition)

- `ECellState.cs` and `EPlayerType.cs`: Enumerations for game state management

2. **DAL (Data Access Layer)** - Handles data persistence and configuration management

- `IRepository.cs`: Interface defining CRUD operations for game configurations

- `ConfigRepositoryJson.cs`: JSON-based configuration storage implementation

- `ConfigRepositoryEF.cs`: Entity Framework-based database implementation (commented out in main code)

3. **Console Application** - Traditional console interface

- `ConsoleApp/Program.cs`: Main entry point with menu system

- `ConsoleController.cs`: Game loop controller

- `ConsoleUI/Ui.cs`: Console-based user interface

4. **Web Application** - ASP.NET Core Razor Pages implementation

- `WebApp/Program.cs`: Application startup and dependency injection configuration

- Razor Pages for game flow: Index, NewGame, GamePlay

5. **Menu System** - Shared menu infrastructure

- `MenuSystem/Menu.cs`: Menu navigation system

### Main Features and Functionality

- **Multi-Interface Support**: Play the game through both console and web interfaces

- **Configurable Game Settings**: Adjustable board size, win conditions, and game names

- **Game State Management**: Complete tracking of board state, player turns, and win detection

- **Configuration Persistence**: Save/load game configurations in JSON format or database

- **Cross-Platform Compatibility**: Works on Windows, macOS, and Linux

### System Design and Data Flow

The system follows a clear data flow pattern:

1. User interacts through either console or web interface

2. Interface calls the GameController/GamePlay controller

3. Controller instantiates GameBrain with configuration parameters

4. GameBrain processes moves and determines game state

5. Results are returned to the UI for display

6. Configuration data flows through IRepository between UI and storage layers

The solution demonstrates good separation of concerns with clear boundaries between user interface, business logic, and data access layers. It also includes proper error handling and validation mechanisms.

### Technical Implementation Details

- Uses C# 12 with nullable reference types enabled

- Implements Entity Framework Core for database operations (SQLite)

- Features dependency injection for loose coupling

- Includes both synchronous and asynchronous data access patterns

- Follows SOLID principles with clear interfaces and abstractions

NB! LLMs are sycophants. Trained to be positive. They will lie to you.

Simpler example:

list all options in @/BLL/ECellState.cs

Response

Based on the content of BLL/ECellState.cs, the ECellState enum contains the following five options:

1. Empty

2. X

3. O

4. XWin

5. OWin

These are the complete set of enum values defined in the file.

Model session: model_session1.txt

More complex workflow

Switch over to architect mode, to plan new features.

User prompt

Plan to implement all not yet implemented methods in @/DAL/ConfigRepositoryEF.cs

If architect mode is too agressive, edit the prompt to your liking.

1. Do some information gathering (using provided tools) to get more context about the task.

2. You should also ask the user clarifying questions to get a better understanding of the task.

3. Once you've gained more context about the user's request, break down the task into clear, actionable steps and create a todo list using the `update_todo_list` tool. Each todo item should be:

- Specific and actionable

- Listed in logical execution order

- Focused on a single, well-defined outcome

- Clear enough that another mode could execute it independently

**Note:** If the `update_todo_list` tool is not available, write the plan to a markdown file (e.g., `plan.md` or `todo.md`) instead.

4. As you gather more information or discover new requirements, update the todo list to reflect the current understanding of what needs to be accomplished.

5. Ask the user if they are pleased with this plan, or if they would like to make any changes. Think of this as a brainstorming session where you can discuss the task and refine the todo list.

6. Include Mermaid diagrams if they help clarify complex workflows or system architecture. Please avoid using double quotes ("") and parentheses () inside square brackets ([]) in Mermaid diagrams, as this can cause parsing errors.

7. Use the switch_mode tool to request that the user switch to another mode to implement the solution.

**IMPORTANT: Focus on creating clear, actionable todo lists rather than lengthy markdown documents. Use the todo list as your primary planning tool to track and organize the work that needs to be done.**

Context window

The context window includes:

- The system prompt (instructions from Kilo Code).

- Full conversation history (the session).

- The content of any files you mention using @.

- The output of any commands or tools Kilo Code uses.

Context managament - prompt engineering

Often agentic tools do not understand the project fully. Do get better understanding, they try to use tools to gather more info to build up better context.

All this is expensive and slow (and is redone in every session). Plus context windows are limited in size. And usually start to hallucinate at about 75% full.

To avoid this repetetive context buildup - write down specification for project that kilo code can include into every session.

List the code style, libraries used, dev patterns, project descriptions etc.

Usually this is a markdown file in the root of the project, called agents.md or something similar.

AGENTS.md

AGENTS.md is an open standard for configuring AI agent behavior in software projects. It's a simple Markdown file placed at the root of your project that contains instructions for AI coding assistants. The standard is supported by multiple AI coding tools, including Kilo Code, Cursor, and Windsurf.

Think of AGENTS.md as a "README for AI agents" - it tells the AI how to work with your specific project, what conventions to follow, and what constraints to respect.

# Project Name

Brief description of the project and its purpose.

## Code Style

- Use TypeScript for all new files

- Follow ESLint configuration

- Use 2 spaces for indentation

## Architecture

- Follow MVC pattern

- Keep components under 200 lines

- Use dependency injection

## Testing

- Write unit tests for all business logic

- Maintain >80% code coverage

- Use Jest for testing

## Security

- Never commit API keys or secrets

- Validate all user inputs

- Use parameterized queries for database access

Context Mentions

Context mentions are a powerful way to provide Kilo Code with specific information about your project, allowing it to perform tasks more accurately and efficiently.

You can use mentions to refer to files, folders, problems, and Git commits.

Context mentions start with the @ symbol.

Type

- file -

@/path/to/file.tsIncludes file contents in request context - Explain the function in @/src/utils.ts - folder -

@/path/to/folderProvides directory structure in tree format - What files are in @/src/components/? - url -

@https://example.comImports website content - "Summarize @https://docusaurus.io/"

NB! Always start from workspace root in file or folder mentions.

Skills

Agent Skills package domain expertise, new capabilities, and repeatable workflows that agents can use. At its core, a skill is a folder containing a SKILL.md file with metadata and instructions that tell an agent how to perform a specific task.

Example (git-commit skill):

---

name: git-commit

description: Generate descriptive commit messages by analyzing git diffs. Use when the user asks for help writing commit messages or user wants to commit and push changes.

---

# Git Commit Helper

## Quick start

Always execute the full workflow: **stage → commit → push**.

1. **Stage all changes**: `git add .`

2. **Review what's staged**: `git diff --staged` and `git status`

3. **Analyze the diff** and generate a conventional commit message

4. **Commit**: `git commit -m "message"`

5. **Push to remote**: `git push`

All three git operations (`git add .`, `git commit`, `git push`) MUST be executed in sequence. Do not stop after committing — always push.

## Commit message format

Follow conventional commits format:

```

<type>(<scope>): <description>

[optional body]

[optional footer]

```

### Types

- **feat**: New feature

- **fix**: Bug fix

- **docs**: Documentation changes

- **style**: Code style changes (formatting, missing semicolons)

- **refactor**: Code refactoring

- **test**: Adding or updating tests

- **chore**: Maintenance tasks

### Examples

**Feature commit:**

```

feat(auth): add JWT authentication

Implement JWT-based authentication system with:

- Login endpoint with token generation

- Token validation middleware

- Refresh token support

```

**Bug fix:**

```

fix(api): handle null values in user profile

Prevent crashes when user profile fields are null.

Add null checks before accessing nested properties.

```

**Refactor:**

```

refactor(database): simplify query builder

Extract common query patterns into reusable functions.

Reduce code duplication in database layer.

```

## Analyzing changes

After staging with `git add .`, review what's being committed:

```bash

# Stage everything first

git add .

# Show files changed

git status

# Show detailed changes

git diff --staged

# Show statistics

git diff --staged --stat

# Show changes for specific file

git diff --staged path/to/file

```

## Commit message guidelines

**DO:**

- Use imperative mood ("add feature" not "added feature")

- Keep first line under 50 characters

- Capitalize first letter

- No period at end of summary

- Explain WHY not just WHAT in body

**DON'T:**

- Use vague messages like "update" or "fix stuff"

- Include technical implementation details in summary

- Write paragraphs in summary line

- Use past tense

## Multi-file commits

When committing multiple related changes:

```

refactor(core): restructure authentication module

- Move auth logic from controllers to service layer

- Extract validation into separate validators

- Update tests to use new structure

- Add integration tests for auth flow

Breaking change: Auth service now requires config object

```

## Scope examples

**Frontend:**

- `feat(ui): add loading spinner to dashboard`

- `fix(form): validate email format`

**Backend:**

- `feat(api): add user profile endpoint`

- `fix(db): resolve connection pool leak`

**Infrastructure:**

- `chore(ci): update Node version to 20`

- `feat(docker): add multi-stage build`

## Breaking changes

Indicate breaking changes clearly:

```

feat(api)!: restructure API response format

BREAKING CHANGE: All API responses now follow JSON:API spec

Previous format:

{ "data": {...}, "status": "ok" }

New format:

{ "data": {...}, "meta": {...} }

Migration guide: Update client code to handle new response structure

```

## Template workflow

Execute these steps in order — all steps are mandatory:

1. **Stage all changes**: `git add .`

2. **Review changes**: Run `git status` and `git diff --staged` to understand what changed

3. **Check recent commits**: Run `git log --oneline -5` to match the project's commit style

4. **Identify type**: Is it feat, fix, refactor, docs, style, test, or chore?

5. **Determine scope**: What part of the codebase is affected?

6. **Write summary**: Brief, imperative description (under 50 chars)

7. **Add body**: Explain why, not just what

8. **Note breaking changes**: If applicable

9. **Commit changes**: `git commit -m "message"`

10. **Push to remote**: `git push` (always push after committing)

## Full workflow example

```bash

# 1. Stage all changes

git add .

# 2. Review what's staged

git diff --staged

# 3. Commit with generated message

git commit -m "type(scope): description"

# 4. Push to remote

git push

```

## Amending commits

Fix the last commit message:

```bash

# Amend commit message only

git commit --amend

# Amend and add more changes

git add forgotten-file.js

git commit --amend --no-edit

```

## Best practices

1. **Atomic commits** - One logical change per commit

2. **Test before commit** - Ensure code works

3. **Reference issues** - Include issue numbers if applicable

4. **Keep it focused** - Don't mix unrelated changes

5. **Write for humans** - Future you will read this

## Commit message checklist

- [ ] Type is appropriate (feat/fix/docs/etc.)

- [ ] Scope is specific and clear

- [ ] Summary is under 50 characters

- [ ] Summary uses imperative mood

- [ ] Body explains WHY not just WHAT

- [ ] Breaking changes are clearly marked

- [ ] Related issue numbers are included

MCP

MCP (Model Context Protocol) is an open-source standard for connecting AI applications to external systems - like usbc for AI.

- The AI assistant (client) connects to MCP servers

- Each server provides specific capabilities (file access, database queries, API integrations)

- The AI uses these capabilities through a standardized interface

- Communication occurs via JSON-RPC 2.0 messages

{

"tools": [

{

"name": "readFile",

"description": "Reads content from a file",

"parameters": {

"path": { "type": "string", "description": "File path" }

}

},

{

"name": "createTicket",

"description": "Creates a ticket in issue tracker",

"parameters": {

"title": { "type": "string" },

"description": { "type": "string" }

}

}

]

}

Basically - kilo code will scan the list of allowed mcp tools and add their description to context. Llm can then decide to when to use them.

Some useful mcp tools:

- git(hub) - full git access

- postgres - ro sql access, schema discovery

- context7 - up to date documentation for most libraries (free for public libraries). https://context7.com/

NB! Control what mode is using what tool and what tools are enabled - context grows.

Kilo code has MCP marketplace - settings/mcp servers.

NB! Kilo code just configures and enables the tool calling - does not install actual mcp server anywhere.

For running local mcp servers - use docker.

https://docs.docker.com/ai/mcp-catalog-and-toolkit/dynamic-mcp/

Info